Happy Horse 1.0

Happy Horse 1.0 Creations

8K, 60fps, cinematic video, faithful to original character: a freckled young woman with loosely tied brown hair in a French retro linen dress, holding a woven basket of wildflowers in a rural garden. She gently looks down at the flowers, brushes the daisy petals, breeze moving her hair and skirt, then looks up at the camera with a soft smile and adjusts the basket. Natural, relaxed, pastoral feeling. Close-up opening, slow zoom, then a slow 360° orbit shot.

8K, 60fps, cinematic video, faithful to original character: a fair-skinned girl with long curly gold-to-mint hair and ice-blue gradient eyes, wearing a French halter satin slip dress and holding a white lace parasol. She stands sideways in sunlight, slowly rotating the parasol as light moves across her face and shoulders. Breeze lifts her hair and skirt, she brushes hair by her ear, looks at the camera with a soft lazy smile, and leans forward slightly. Elegant, airy, sensual mood. Close-up opening, slow zoom in.

8K, cinematic animation, faithful to the original elf girl: long light-golden curly hair, pointed elf ears, emerald green eyes, a green leaf and white flower wreath, gold and emerald accessories, and a white-green elf gown. She stands in a sun-dappled magical forest as a soft breeze lifts her hair and dress. She slowly raises her right hand to touch a falling green leaf, smiles gently, looks softly at the camera, and turns slightly as her skirt moves naturally. Graceful, elegant, healing forest mood. Opening wide shot, slow zoom to upper body close-up, then a slow 360° orbit around her, showing the gown embroidery, accessories, and forest light.

What Makes Happy Horse 1.0 Stand Out?

Text-to-Video Generation

Happy Horse 1.0 suits users who want directed text-to-video results rather than broad, generic outputs. It is especially useful when prompts specify scene structure, camera movement, lighting, and subject action. Prompt-level control is one of the main reasons people compare this model with other recent AI video tools.

Image-to-Video Animation

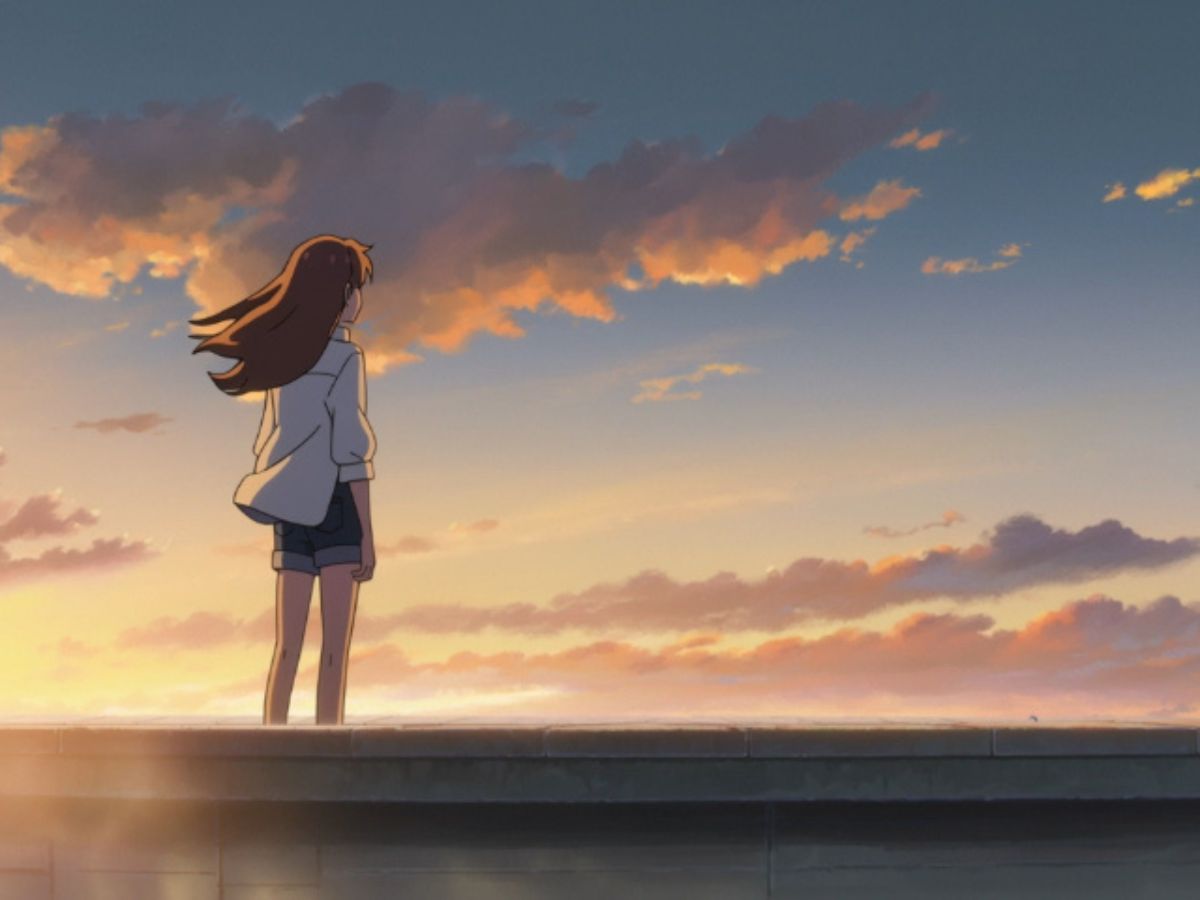

The image-to-video workflow is useful when you need stronger visual consistency for a character, product, or scene. Compared with models that drift more easily from the source look, Happy Horse 1.0 is better suited to reference-led generation, where composition, design cues, and overall style need to stay close to the starting image.

Multi-Shot Motion and Scene Coherence

A notable strength of Happy Horse 1.0 is that it is judged on more than single-shot quality. It also targets short sequences in which motion needs to feel smooth and shot-to-shot transitions need to stay readable. That makes it better suited to structured storytelling than models that produce a strong first frame but lose coherence as the clip develops.

Audio-Aware Video and Lip Sync Workflows

Happy Horse 1.0 is also drawing attention for its support of audio-aware generation and lip sync workflows, which sets it apart from a standard silent video generator. For users exploring newer AI video tools on OCMaker AI, this matters because dialogue, vocal timing, and sound-led scenes are often where differences between models are easiest to evaluate.

Why Use Happy Horse 1.0?

Text-to-Video with Strong Prompt Control

Image-to-Video for More Consistent Results

1080p AI Video Output

Multi-Shot Video Generation

Natural Motion and Scene Coherence

Online Access and API Workflow

How to Use Happy Horse 1.0

Choose the Happy Horse 1.0 Model

Start by selecting Happy Horse 1.0 in the AI video workflow. The model is best suited to testing text-to-video, image-to-video, motion quality, and more directed scene generation, so choose it when those are your goals.

Add Your Prompt or Reference Image

Enter a clear prompt, upload a reference image, or combine both depending on the result you want. More specific inputs usually make it easier to guide subject appearance, scene structure, lighting, and overall visual consistency.

Generate and Review the Video

Generate the video, preview the result, and review whether the motion, scene coherence, and character consistency match your goal. If needed, refine the prompt or source image and run another test for a more controlled output.

What Creators Are Saying